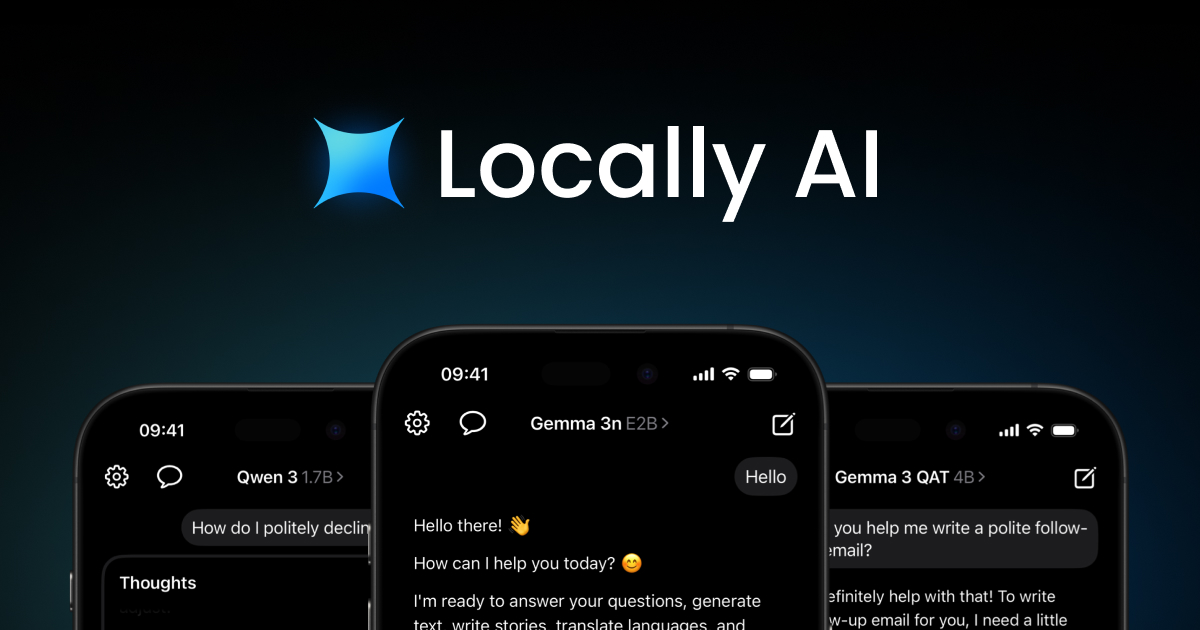

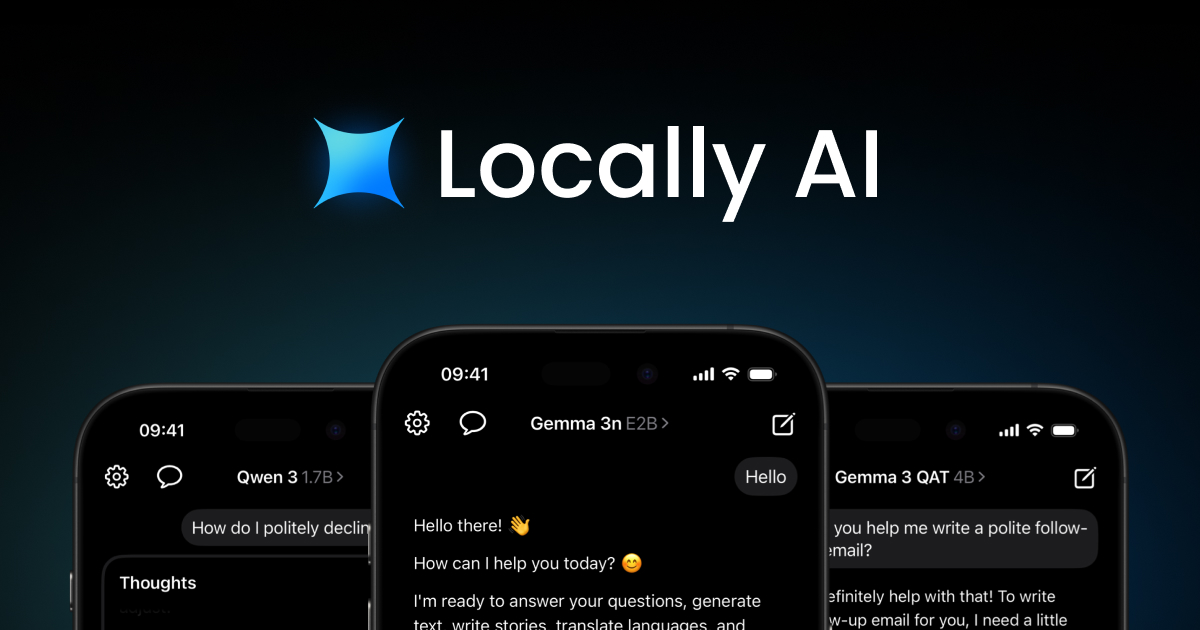

Locally AI runs AI models completely offline on iPhone, iPad, and Mac. No internet connection is required, so data stays on the device, and the app is optimized for Apple Silicon chips.

Locally AI lets users run popular open-source AI models directly on their Apple devices. The application processes all data on the device itself, so prompts and responses stay private and no network access is needed.

Key features include support for multiple AI models, such as Meta Llama, Google Gemma, Qwen, and DeepSeek. The app also offers a local voice mode for natural conversations, Siri integration for voice commands, customizable system prompts, and integration with Apple's Control Center and Shortcuts app.

The application uses Apple's MLX machine learning framework to optimize performance on Apple Silicon chips. MLX is built around Apple Silicon's unified memory architecture, in which the CPU and GPU share the same memory. This allows efficient model loading and processing with lower power consumption, resulting in smooth performance across iPhone, iPad, and Mac devices.

Benefits include privacy, since data never leaves the device; offline operation; and performance tuned specifically for Apple hardware. Users can run AI models locally for tasks including conversation, reasoning, and image processing.

The app is aimed at Apple device users who prioritize privacy and want to run AI models locally. It supports recent iPhone, iPad, and Mac models with Apple Silicon chips and integrates with Apple's ecosystem, including Siri, Control Center, and Shortcuts.

Key Features

- Run AI models completely offline without an internet connection, so all processing happens locally on your device for maximum privacy and security.

- Support for multiple open-source models, including Meta Llama, Google Gemma, Qwen, and DeepSeek, optimized specifically for Apple Silicon chips.

- Local voice mode enables natural conversations using on-device processing, without requiring cloud services or external connections.

Publisher: admin

Launch Date: 2026-03-05

Platform: mobile

Pricing: freemium